Azure Databricks Creation using PowerShell and Terraform

Introduction

Over the last couple of years, Databricks has evolved a lot, with many enhancements in that space. There are now several options for creating Databricks resources: PowerShell, Terraform or the Azure CLI. As an experiment, I tried all three of them to see how well they can be integrated.

This article shows how to create Azure Databricks using PowerShell, Terraform and REST API calls.

Content

- azure-pipelines.yml → Azure Pipelines YAML file to build and deploy to the Azure cloud.

- checkcluster.ps1 → Checks cluster creation and its status.

- createcluster.ps1 → Creates a cluster in Databricks.

- createresourcegroup.ps1 → Creates a new resource group.

- databricks-workspace-template.json → Defines the JSON body of the Databricks workspace and the associated components that are part of it.

- databrickstoken.ps1 → Gets a token from Azure and assigns it to Databricks for secure login.

- main.tf → Contains the actual cluster creation, resource group (RG) creation and deployment mode.

- newworkspace.ps1 → Creates a new workspace in Databricks.

- validatetemplate.ps1 → Checks whether Databricks was created successfully or whether there were any errors during creation.

- variables.tf → Contains the properties (variables) used by the Terraform calls.

- versions.tf → Contains the provider details along with the version.


Requirements

- terraform = 2.26.0

- An app registration in the Azure cloud

- PowerShell

Build Stage

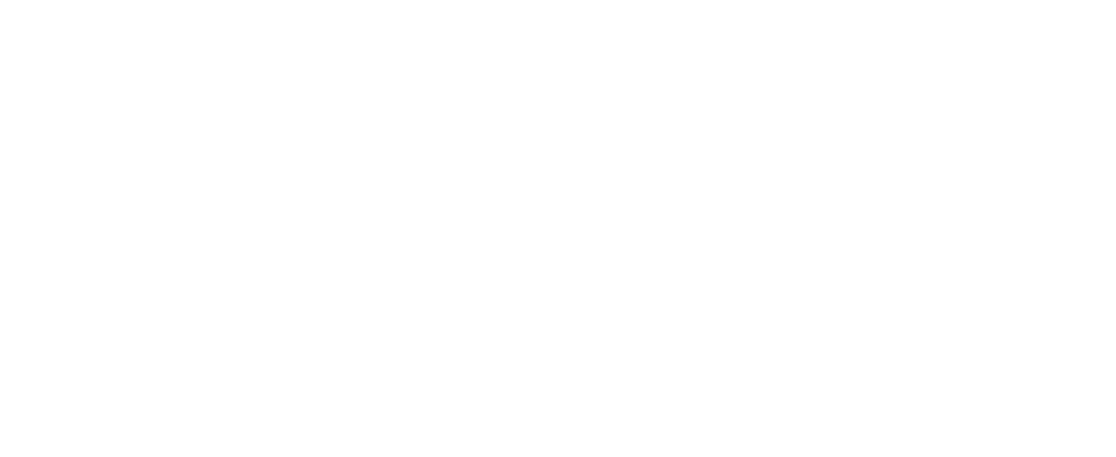

1. The first step is to create a new Enterprise application in Azure. In our case, I created a new app named “AzureDatabricks”. Once it is created, copy its Application ID.

2. Now go to the Azure DevOps Git repo where we are modifying the files, and update line 2 of the “databrickstoken.ps1” file:

`$TOKEN = (az account get-access-token --resource 2ff814a6-3304-4ab8-85cb-xxxxxxxxxx | jq --raw-output '.accessToken')`

3. Make the same change in the “azure-pipelines.yml” file at line 79:

`$TOKEN = (az account get-access-token --resource 2ff814a6-3304-4ab8-85cb-xxxxxxxxx | jq --raw-output '.accessToken')`

I have included azure-pipelines.yml for those who are more comfortable working with the YAML format.

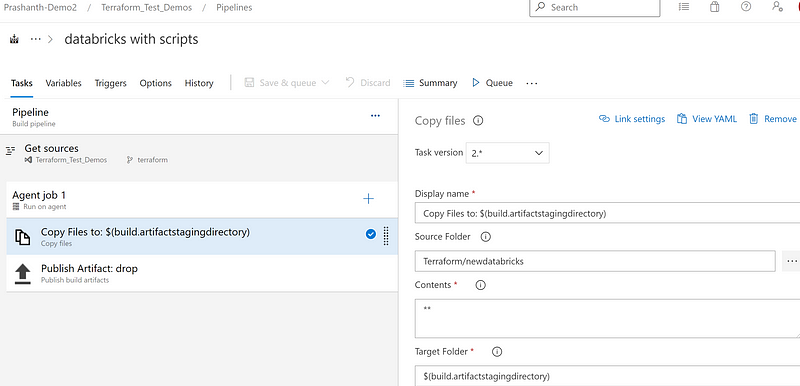

4. Now let's create a new build pipeline. As a first step, I copy the files, create an artefact and add another task to publish it. Then trigger a new build.

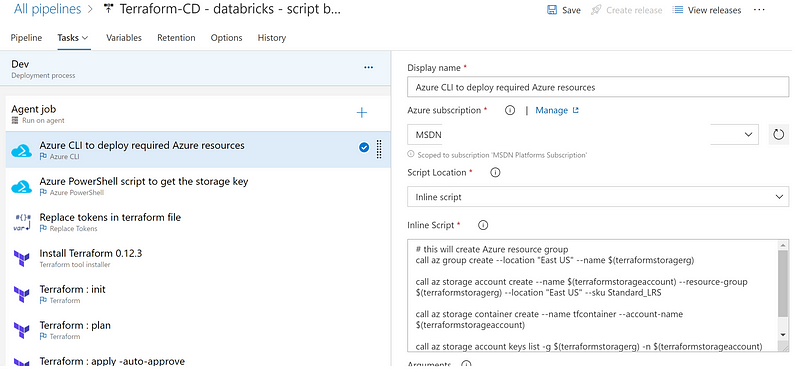
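If jq is not available on the build agent, the `.accessToken` extraction done by the `az account get-access-token ... | jq` command in steps 2 and 3 can be reproduced in a few lines of Python. This is a minimal sketch, assuming the Azure CLI is installed and already logged in; `get_databricks_token` and `extract_access_token` are hypothetical helper names, and the resource ID stays elided exactly as in the article.

```python
import json
import shutil
import subprocess

# Left elided as in the article; substitute the full resource ID you use.
DATABRICKS_RESOURCE = "2ff814a6-3304-4ab8-85cb-xxxxxxxxxx"


def extract_access_token(cli_output: str) -> str:
    """Same result as piping the CLI output through jq --raw-output '.accessToken'."""
    token = json.loads(cli_output).get("accessToken")
    if not token:
        raise ValueError("az output does not contain an accessToken field")
    return token


def get_databricks_token(resource: str = DATABRICKS_RESOURCE) -> str:
    """Call `az account get-access-token --resource <id>` and return the token.

    Assumes the Azure CLI is installed and logged in (e.g. by the pipeline's
    Azure CLI task). shutil.which also resolves az.cmd on Windows agents.
    """
    az = shutil.which("az") or "az"
    result = subprocess.run(
        [az, "account", "get-access-token", "--resource", resource, "--output", "json"],
        capture_output=True,
        text=True,
        check=True,  # fail the step if az returns a non-zero exit code
    )
    return extract_access_token(result.stdout)
```

Splitting the parsing out of the subprocess call keeps the JSON handling testable without an Azure login.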

Release Stage → Option 1

First, let's create a new release pipeline that uses simple Terraform (.tf) files, with the new workspace created by a PowerShell script.

- Open Azure DevOps, go to Releases and create a new release pipeline. Add the build artefact to it.

- As part of the release pipeline, I added the tasks below to create a new Databricks cluster:

  - An Azure CLI task to create a new resource group and the storage used to save the .tfstate file.

  - An Azure CLI task to get the storage key.

  - A task to replace any tokens in the Terraform files.

  - The Terraform Installer task, installing Terraform version 0.12.3.

  - Terraform init.

  - Terraform plan.

  - Finally, Terraform apply with the auto-approve option.
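The token-replacement task substitutes pipeline variables into placeholders in the .tf files before Terraform runs. A minimal Python sketch of that substitution follows, assuming a `#{Name}#` placeholder style (a common convention for Azure DevOps token-replacement tasks; your files may use a different pattern). `replace_tokens` and `replace_tokens_in_dir` are hypothetical helpers, not part of any pipeline task.

```python
import re
from pathlib import Path
from typing import Mapping

# Matches placeholders such as #{storage_account_name}# (assumed token style).
TOKEN_PATTERN = re.compile(r"#\{\s*([A-Za-z0-9_.\-]+)\s*\}#")


def replace_tokens(text: str, values: Mapping[str, str]) -> str:
    """Replace every #{name}# placeholder with values[name].

    Raises KeyError for a placeholder with no value, so a missing pipeline
    variable fails the step instead of leaking a literal token into Terraform.
    """
    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in values:
            raise KeyError(f"no value supplied for token '{name}'")
        return values[name]

    return TOKEN_PATTERN.sub(substitute, text)


def replace_tokens_in_dir(directory: str, values: Mapping[str, str]) -> None:
    """Rewrite all *.tf files under `directory` in place."""
    for tf_file in Path(directory).rglob("*.tf"):
        original = tf_file.read_text(encoding="utf-8")
        tf_file.write_text(replace_tokens(original, values), encoding="utf-8")
```

For example, `replace_tokens('key = "#{tfstate_key}#"', {"tfstate_key": "databricks.tfstate"})` returns `'key = "databricks.tfstate"'`. Failing on a missing value is a deliberate choice; a silent pass-through would only surface later as a confusing `terraform init` error.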

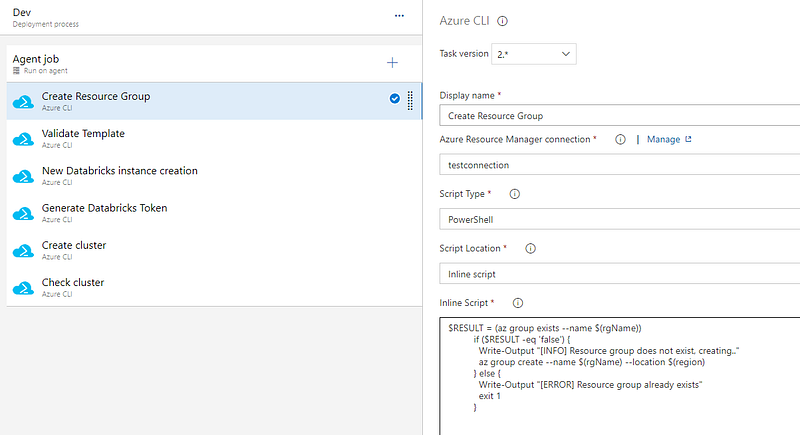

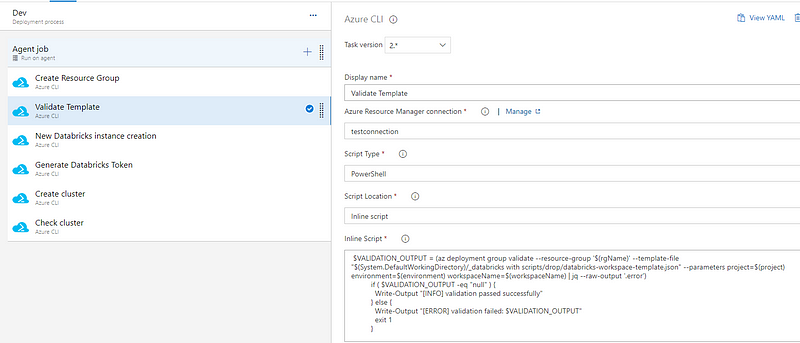

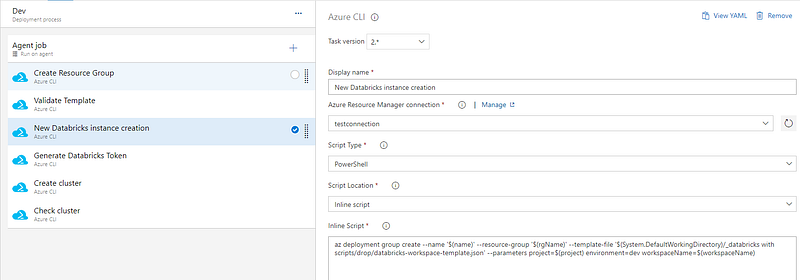

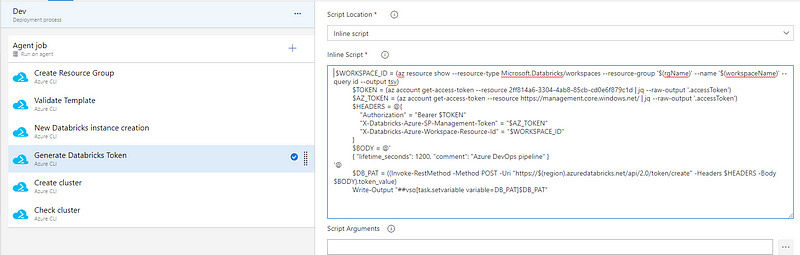

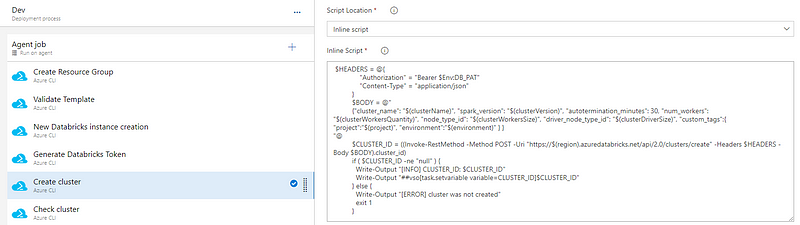
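Outside Azure DevOps, the Terraform init, plan and apply tasks of this option map onto plain Terraform CLI calls. The sketch below is a minimal illustration, assuming main.tf declares an empty `backend "azurerm" {}` block so that the state storage created by the first Azure CLI task is supplied through `-backend-config`; the storage account and container names in the example are hypothetical, and `terraform_commands`/`run_terraform` are illustrative helpers, not the pipeline tasks themselves.

```python
import subprocess
from typing import List, Mapping


def terraform_commands(backend: Mapping[str, str]) -> List[List[str]]:
    """Build the init/plan/apply argument lists used by the release stage.

    `backend` holds azurerm backend settings such as storage_account_name,
    container_name, key and access_key (the storage key fetched earlier).
    """
    init = ["terraform", "init", "-input=false"]
    for name, value in backend.items():
        init.append(f"-backend-config={name}={value}")
    plan = ["terraform", "plan", "-input=false", "-out=tfplan"]
    # A saved plan is applied without prompting; -auto-approve mirrors the
    # "auto-approve" option of the pipeline task.
    apply = ["terraform", "apply", "-input=false", "-auto-approve", "tfplan"]
    return [init, plan, apply]


def run_terraform(working_dir: str, backend: Mapping[str, str]) -> None:
    """Run each command in order, stopping at the first failure."""
    for args in terraform_commands(backend):
        subprocess.run(args, cwd=working_dir, check=True)
```

Passing arguments as a list avoids shell quoting problems with storage keys, which often end in `==`.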

Release Stage → Option 2 using Azure CLI

- Creating a new resource group.

- Validating Template for any errors.

- New Databricks instance creation.

- Generating Databricks Token.

- Creating a new Cluster inside Databricks

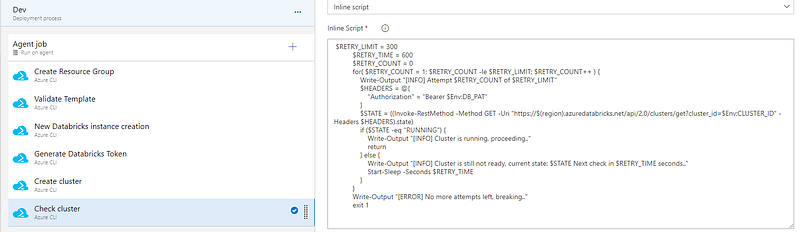

- Cluster creation status.
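Generating the Databricks token and creating the cluster are plain REST calls against the workspace. The sketch below shows the shape of those requests using only the Python standard library, assuming the Databricks REST API 2.0 endpoints `token/create` and `clusters/create` and that the Azure AD token belongs to an identity with access to the workspace. The workspace URL, cluster name, Spark version and node type are placeholders to replace with values valid for your workspace, and the `build_*` helpers are hypothetical, not the article's scripts.

```python
import json
import urllib.request
from typing import Any, Dict


def _json_post(workspace_url: str, path: str, bearer_token: str,
               body: Dict[str, Any]) -> urllib.request.Request:
    """Build an authenticated JSON POST to <workspace>/api/2.0/<path>."""
    return urllib.request.Request(
        url=f"{workspace_url.rstrip('/')}/api/2.0/{path}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {bearer_token}",
                 "Content-Type": "application/json"},
        method="POST",
    )


def build_token_request(workspace_url: str, aad_token: str,
                        lifetime_seconds: int = 3600) -> urllib.request.Request:
    """token/create: trade the Azure AD token for a Databricks access token."""
    body = {"lifetime_seconds": lifetime_seconds, "comment": "release pipeline"}
    return _json_post(workspace_url, "token/create", aad_token, body)


def build_cluster_request(workspace_url: str, token: str, cluster_name: str,
                          spark_version: str, node_type_id: str,
                          num_workers: int = 1) -> urllib.request.Request:
    """clusters/create: the minimal fields for a fixed-size cluster."""
    body = {
        "cluster_name": cluster_name,
        "spark_version": spark_version,
        "node_type_id": node_type_id,
        "num_workers": num_workers,
        "autotermination_minutes": 30,  # stop idle clusters to save cost
    }
    return _json_post(workspace_url, "clusters/create", token, body)


def send(request: urllib.request.Request) -> Dict[str, Any]:
    """Send the request and decode the JSON reply (token_value, cluster_id, ...)."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

In use, `send(build_token_request(url, aad_token))["token_value"]` would give the token for `build_cluster_request`, and the `cluster_id` in that reply is what a status check needs. Building requests separately from sending them keeps the payloads easy to inspect in a pipeline log.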

Validation

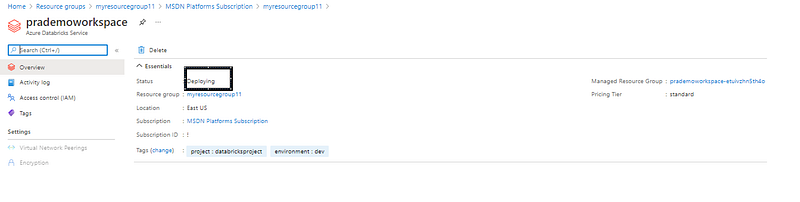

1. Now let's log in to Azure and start validating our new Databricks instance and its supporting components.

2. Check the Databricks status under the specified resource group.

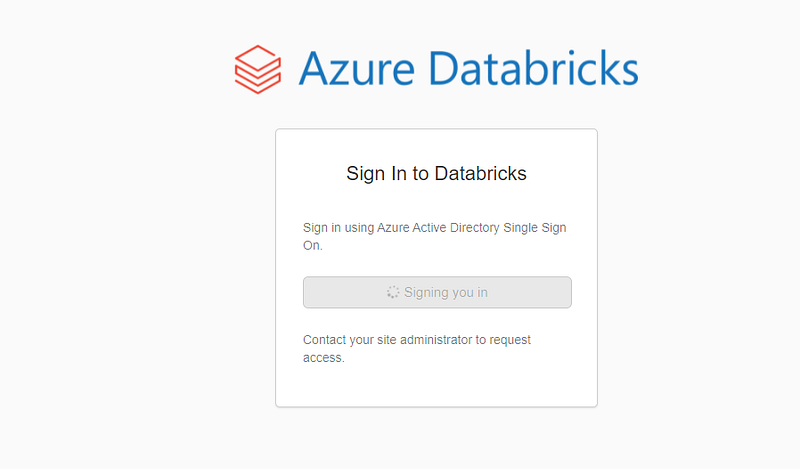

3. Next, log in to Azure Databricks by clicking Launch Workspace. Make sure it asks for your login details; it should show the “Sign in to Databricks” screen.

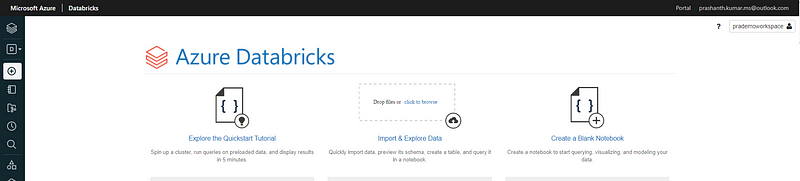

It will then show the workspace.

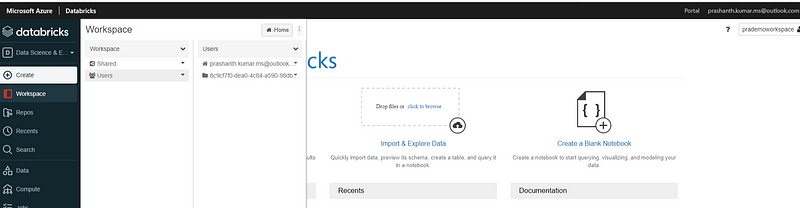

4. Check Workspace to make sure you can open your workspace.

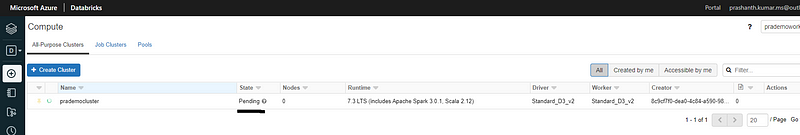

5. Check whether any clusters are being created or are ready.

(This corresponds directly to task 6, “check cluster”, in our release pipeline.)
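The “check cluster” task essentially polls the cluster state until it settles. A minimal sketch follows, assuming the `clusters/get` endpoint of the Databricks REST API 2.0, whose response carries a `state` field (for example PENDING, RUNNING or TERMINATED). The state fetcher is injected so the loop can be exercised without a live workspace; `wait_for_cluster` and `http_fetch_state` are hypothetical helpers, not the article's checkcluster.ps1.

```python
import json
import time
import urllib.parse
import urllib.request
from typing import Callable

SUCCESS_STATE = "RUNNING"
# States that mean the cluster will not come up on its own; other states
# (PENDING, RESTARTING, RESIZING, ...) keep the loop polling.
FAILURE_STATES = {"TERMINATING", "TERMINATED", "ERROR"}


def http_fetch_state(workspace_url: str, token: str, cluster_id: str) -> str:
    """GET /api/2.0/clusters/get?cluster_id=... and return its `state` field."""
    query = urllib.parse.urlencode({"cluster_id": cluster_id})
    request = urllib.request.Request(
        f"{workspace_url.rstrip('/')}/api/2.0/clusters/get?{query}",
        headers={"Authorization": f"Bearer {token}"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))["state"]


def wait_for_cluster(fetch_state: Callable[[], str], timeout_s: float = 1800,
                     interval_s: float = 30,
                     sleep: Callable[[float], None] = time.sleep) -> str:
    """Poll until the cluster is RUNNING; raise if it fails or times out."""
    waited = 0.0
    while True:
        state = fetch_state()
        if state == SUCCESS_STATE:
            return state
        if state in FAILURE_STATES:
            raise RuntimeError(f"cluster ended in state {state}")
        if waited >= timeout_s:
            raise TimeoutError(f"cluster still {state} after {timeout_s} s")
        sleep(interval_s)
        waited += interval_s
```

In a pipeline this could be called as `wait_for_cluster(lambda: http_fetch_state(url, token, cluster_id))`, so a failed or stuck cluster fails the release instead of passing silently.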

Source code GitHub link: learnprofile/Databricks (github.com)